How to Use rs-trafilatura with spider-rs


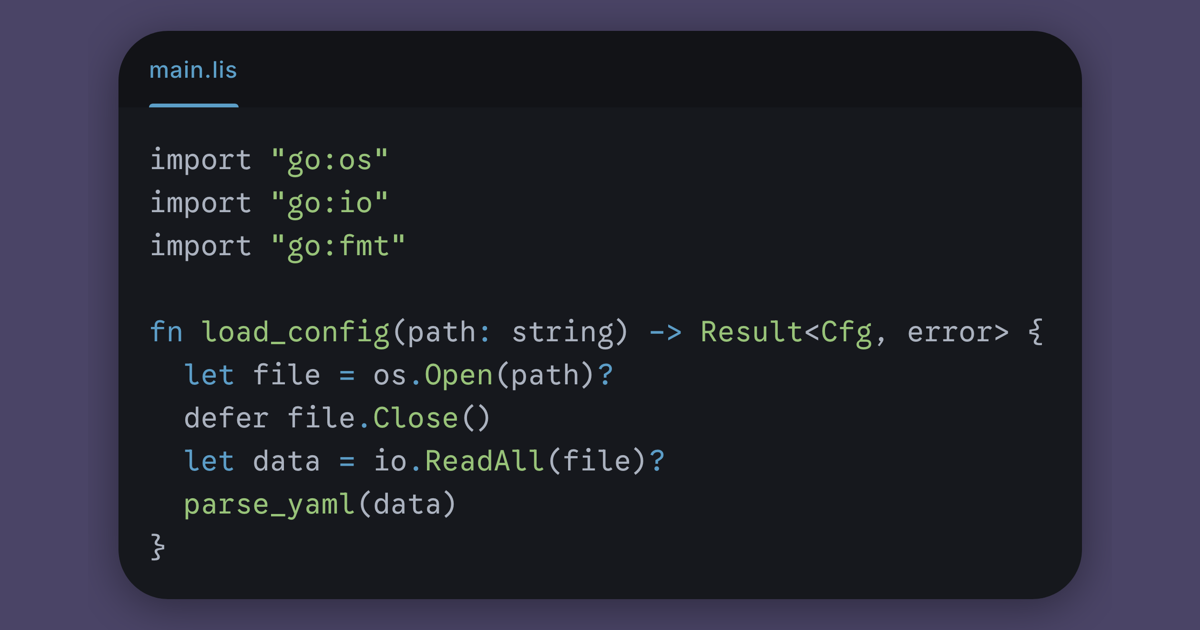

Source: DEV Community

spider is a high-performance async web crawler written in Rust. It discovers, fetches, and queues URLs, but content extraction is left to you. rs-trafilatura slots in as the extraction layer, giving you page-type-aware content extraction with quality scoring on every crawled page.

Setup

Add both crates to your Cargo.toml:

```toml
[dependencies]
rs-trafilatura = { version = "0.2", features = ["spider"] }
spider = "2"
tokio = { version = "1", features = ["full"] }
```

The spider feature flag enables rs_trafilatura::spider_integration, which provides convenience functions that accept spider's Page type directly.

Basic: Crawl Then Extract

The simplest approach is to crawl a site, then extract content from every page:

```rust
use spider::website::Website;
use rs_trafilatura::spider_integration::extract_page;

#[tokio::main]
async fn main() {
    let mut website = Website::new("https://example.com");
    website.crawl().await;

    for page in website.get_pages().into_iter().flatten() {
        match extract_page(&page) {
            Ok(result
```
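The per-page `match` in the crawl-then-extract loop is where extraction failures are handled, so that one bad page does not stop the rest of the batch. The following is a minimal, std-only sketch of that pattern. It deliberately does not use spider or rs-trafilatura: `Page`, `Extracted`, `ExtractError`, `extract_all`, and the local `extract_page` are hypothetical stand-ins invented for illustration, and the real result and error types may look quite different.

```rust
// Hypothetical stand-ins so the sketch compiles with the standard library only.
// These are NOT the real spider or rs-trafilatura types.
#[derive(Debug)]
struct Page {
    url: String,
    html: String,
}

#[derive(Debug)]
struct Extracted {
    text: String,
}

#[derive(Debug, PartialEq)]
struct ExtractError(String);

// Placeholder extractor (assumption): fails on blank HTML, otherwise
// returns the input with surrounding whitespace trimmed.
fn extract_page(page: &Page) -> Result<Extracted, ExtractError> {
    let body = page.html.trim();
    if body.is_empty() {
        Err(ExtractError(format!("no content at {}", page.url)))
    } else {
        Ok(Extracted { text: body.to_string() })
    }
}

// Run the extractor over every page, keeping successes and failures
// separate so that a single failing page does not abort the loop.
fn extract_all(pages: &[Page]) -> (Vec<(String, Extracted)>, Vec<ExtractError>) {
    let mut ok = Vec::new();
    let mut failed = Vec::new();
    for page in pages {
        match extract_page(page) {
            Ok(result) => ok.push((page.url.clone(), result)),
            Err(err) => failed.push(err),
        }
    }
    (ok, failed)
}

fn main() {
    let pages = vec![
        Page { url: "https://example.com/a".into(), html: "  hello  ".into() },
        Page { url: "https://example.com/b".into(), html: "   ".into() },
    ];

    let (ok, failed) = extract_all(&pages);

    for (url, result) in &ok {
        println!("{url}: {} bytes of text", result.text.len());
    }
    for err in &failed {
        eprintln!("skipped: {}", err.0);
    }
}
```

In a real crawl, the stand-in extractor would be replaced by the crate's own function and the slice of pages by whatever the crawler returns. The point of the sketch is only the shape of the loop: match on each result, record failures, and keep going.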