/insights, or Why the Analytics Developer Had No Analytics

Source: DEV Community

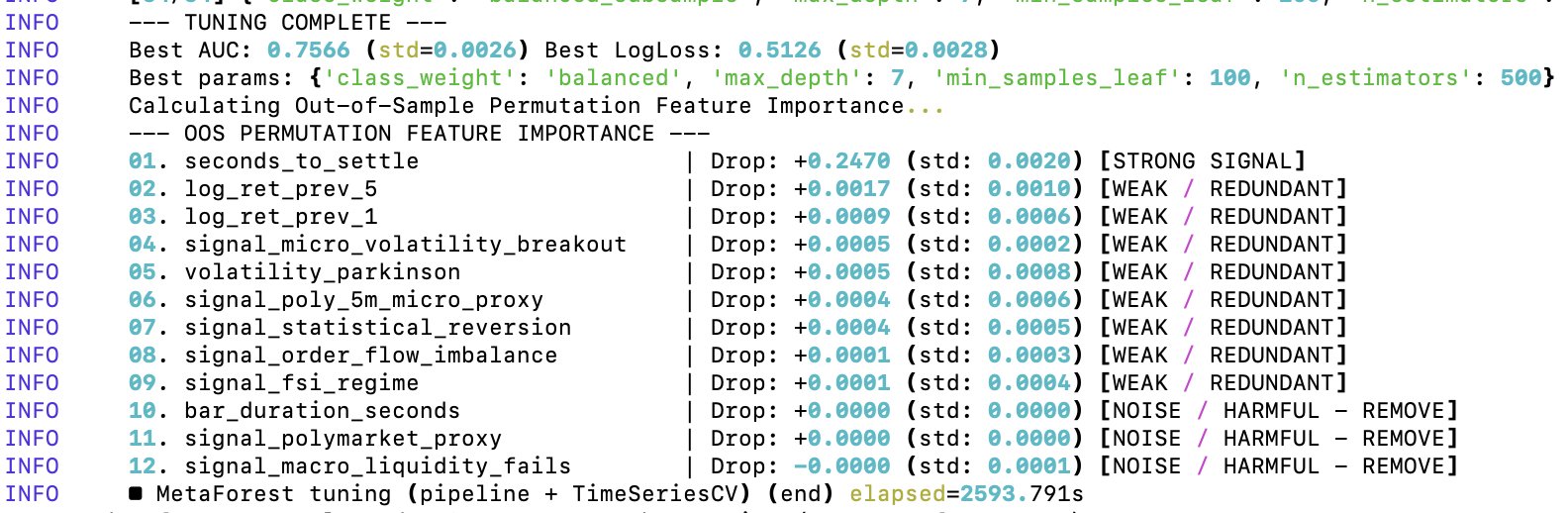

TL;DR: You wouldn't run infrastructure without monitoring. You shouldn't run AI collaboration without it either. Claude Code's /insights command gives you a usage dashboard for your coding sessions -- token costs, tool patterns, session behavior. What it reveals about how you actually work with AI is more interesting than the numbers themselves.

Who this is for: Anyone using AI coding assistants who wants to understand their own collaboration patterns -- not just ship features. The approach works with any tool that exposes usage data, but the examples here use Claude Code.

I've spent the last year building the operational infrastructure for AI-assisted development: documentation systems, automated session handoffs, quality enforcement, custom agents. All of it focused on making AI collaboration reliable and repeatable across sessions and projects, but none of it focused on measuring whether it actually was. This, from me, the agile evangelista who murmurs "outcomes over outputs" while brushing he